...


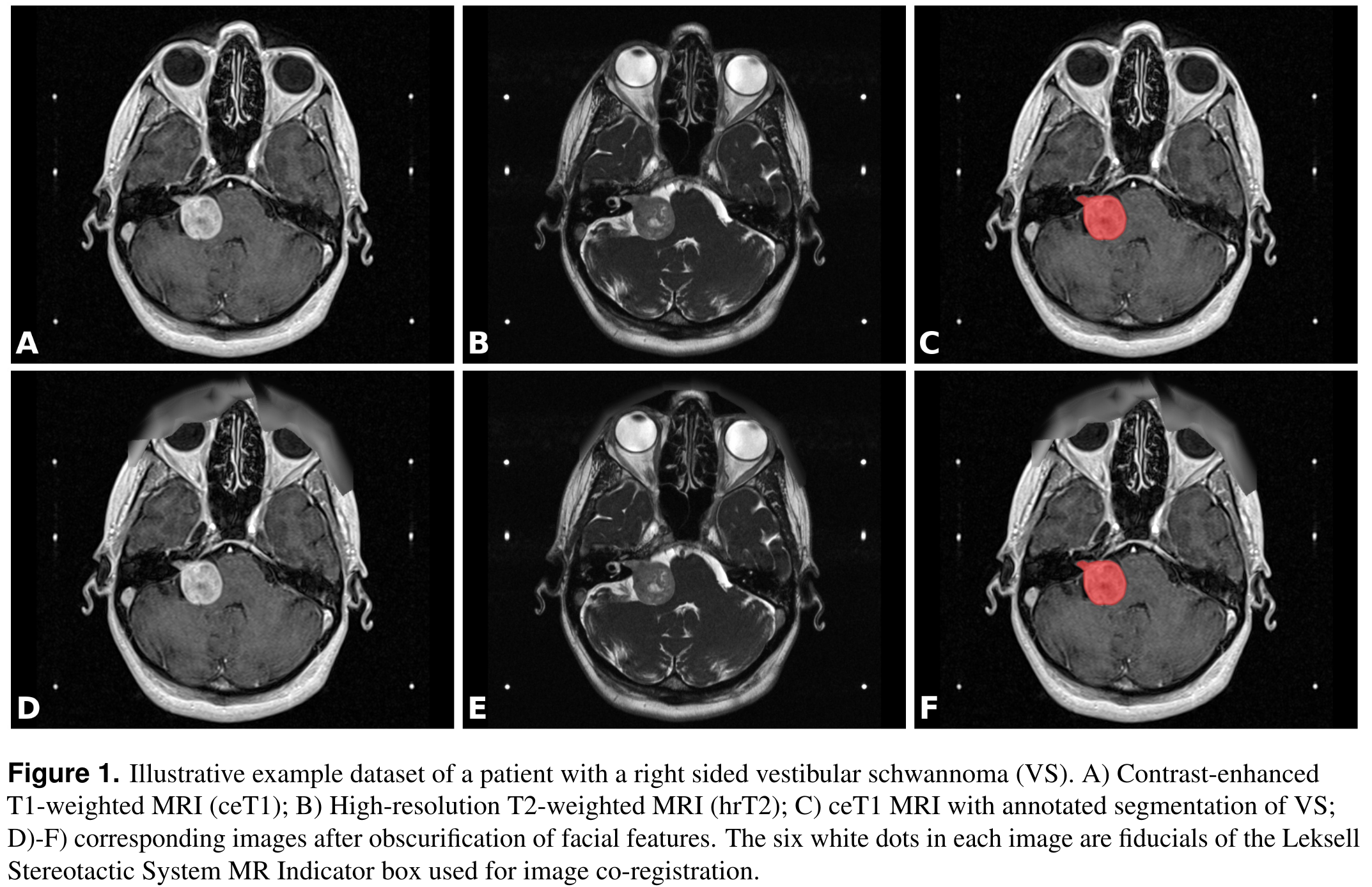

This collection contains a labeled dataset of MRI images collected on 242 consecutive patients with vestibular schwannoma (VS) undergoing Gamma Knife stereotactic radiosurgery (GK SRS). The structural images included contrast-enhanced T1-weighted (ceT1) images and high-resolution T2-weighted (hrT2) images. Each imaging dataset is accompanied by the patient’s radiation therapy (RT) dataset including the RTDose, RTStructures and RTPlan. Additionally, registration matrices (.tfm format) and segmentation contour lines (JSON format) are provided and described in the Versions tab below. All structures were manually segmented in consensus by the treating neurosurgeon and physicist using both the ceT1 and hrT2 images.

The value of this collection is to provide clinical image data including fully annotated tumour segmentations to facilitate the development and validation of automated segmentation frameworks. It may also be used for research relating to radiation treatment.

Imaging data from consecutive patients with a single sporadic VS treated with GK SRS between the years of 2012 and 2018 were screened for the study. All adult patients older than 18 years with a single unilateral VS treated with GK SRS were eligible for inclusion in the study, including patients who had previously undergone operative surgical treatment. In total, 248 patients met these initial inclusion criteria including 51 patients who had previously undergone surgery. Patients were only included in the study if their pre-treatment image acquisition dataset was complete; 4 patients were thus excluded because of incomplete datasets and 2 further patients were excluded because the tumour was not completely contained in the image field of view.

The images were obtained on a 32-channel Siemens Avanto 1.5T scanner using a Siemens single-channel head coil.

All manual segmentations were performed using Gamma Knife planning software (Leksell GammaPlan, Elekta, Sweden) that employs an in-plane semiautomated segmentation method. Using this software, each axial slice was manually segmented in turn.

Registration matrices and JSON contours

Registration Matrices: For each subject and each modality there is a text file named inv_T1_LPS_to_T2_LPS.tfm or inv_T2_LPS_to_T1_LPS.tfm. The files specify affine transformation matrices that can be used to co-register the T1 image to the T2 image and vice versa. The file format is a standard format defined by the Insight Toolkit (ITK) library. The matrices are the result of the co-registration of fiducials of the Leksell Stereotactic System MR Indicator box into which the patient’s head is fixed during image acquisition. The localization of fiducials and the co-registration were performed automatically by the Leksell GammaPlan software.

Contours: For each subject and each modality there is a text file named contours.json. These contour files in the T1 and T2 folders contain the contour points of the segmented structures in JavaScript Object Notation (JSON) format, mapped in the coordinate frames of the T1 image and the T2 image, respectively. There can be small differences between the contour points of the RTSTRUCT and the contour points of the JSON files as explained in the following:

Related software

Please see the GitHub repository link, which contains a script to organize the downloaded data into a more convenient folder structure, and a script to perform co-registration based on the .tfm files and to convert the downloaded DICOM images and JSON segmentation contours into NIfTI format. Moreover, the repository contains an algorithm for automatic segmentation of VS with deep learning, adapted to this data set.

The applied neural network is based on the 2.5D UNet described in "An artificial intelligence framework for automatic segmentation and volumetry of vestibular schwannomas from contrast-enhanced T1-weighted and high-resolution T2-weighted MRI" (Shapey, et al., 2021) and has been adapted to yield improved segmentation results. Our implementation uses MONAI, a freely available, PyTorch-based framework for deep learning in healthcare imaging (Project MONAI). This new implementation was devised to provide a starting point for researchers interested in automatic segmentation using state-of-the-art deep learning frameworks for medical image processing.
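To illustrate how the .tfm registration files could be used outside the provided scripts, the following is a minimal sketch (not the repository's own code) that parses an ITK text transform and applies it to a single point. It assumes the files store a 3D `AffineTransform` in the usual ITK text layout: 12 `Parameters` (a row-major 3x3 matrix followed by a translation) and 3 `FixedParameters` (the centre of rotation). The function names `parse_itk_affine_tfm` and `apply_affine` and the example transform text are hypothetical. Which image space the transform maps from and to should be checked against the data before relying on it.

```python
from typing import Dict, List, Sequence, Tuple

Matrix = List[List[float]]
Vector = List[float]


def parse_itk_affine_tfm(text: str) -> Tuple[Matrix, Vector, Vector]:
    """Parse the text of an ITK transform file holding one 3D affine transform.

    Assumes 12 parameters: 9 matrix entries (row-major) then 3 translations.
    Returns (matrix, translation, center).
    """
    fields: Dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and header comments
        key, _, value = line.partition(":")
        fields[key.strip()] = value.strip()

    params = [float(v) for v in fields["Parameters"].split()]
    if len(params) != 12:
        raise ValueError("expected 12 parameters for a 3D affine transform")

    matrix = [params[0:3], params[3:6], params[6:9]]
    translation = params[9:12]
    # FixedParameters hold the centre of rotation; default to the origin.
    center = [float(v) for v in fields.get("FixedParameters", "").split()]
    if len(center) != 3:
        center = [0.0, 0.0, 0.0]
    return matrix, translation, center


def apply_affine(matrix: Matrix, translation: Vector, center: Vector,
                 point: Sequence[float]) -> Vector:
    """Apply y = A (x - c) + t + c to one point in physical coordinates."""
    shifted = [p - c for p, c in zip(point, center)]
    return [
        sum(a * s for a, s in zip(row, shifted)) + t + c
        for row, t, c in zip(matrix, translation, center)
    ]


# Hypothetical file content: identity rotation with a small translation.
example_tfm = """#Insight Transform File V1.0
#Transform 0
Transform: AffineTransform_double_3_3
Parameters: 1 0 0 0 1 0 0 0 1 2.5 -1.0 0.5
FixedParameters: 0 0 0
"""

matrix, translation, center = parse_itk_affine_tfm(example_tfm)
moved = apply_affine(matrix, translation, center, [10.0, 20.0, 30.0])
print(moved)
```

For resampling whole images rather than individual points, a library that reads ITK transforms directly (for example SimpleITK) is likely the more practical choice. The pure-Python version here is only meant to make the file layout and the affine formula explicit.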

Acknowledgements

This work was supported by Wellcome Trust (203145Z/16/Z, 203148/Z/16/Z, WT106882), EPSRC (NS/A000050/1, NS/A000049/1) and MRC (MC_PC_180520) funding. Tom Vercauteren is also supported by a Medtronic/Royal Academy of Engineering Research Chair (RCSRF1819\7\34).


...